I gave the Peter G. Peterson Distinguished Lecture on “National Security and Fiscal Policy” at the Foreign Policy Association (FPA) in New York last week. Henry Fernandez gave a kind introduction and Tom Michaud moderated with great points. And thanks to FPA for the book edited by Michael Auslin and Noel Lateef, America in the World 2020.

Four years ago, Paul O’Neill gave the Inaugural Peter G. Peterson Lecture. Pete Peterson was in the audience. Paul and I served together in the George W. Bush Treasury, and we became good friends. Paul focused on the deficit in the 2016 lecture; he learned from Pete and I learned from both. Last year, another good friend, John Lipsky, gave the Peter G. Peterson Lecture, and he wondered creatively how long the economic expansion would last. It turned out not long, but for reasons that neither John nor anyone else could forecast. What a different time we are in now!

I really like the idea of combining national security and fiscal policy as in the theme of the lecture series. A few years ago, George Shultz (who gave the Ethel LeFrak Distinguished Lecture a decade ago at the FPA) edited a book, Blueprint for America, which has a chapter by Admiral James Ellis, Secretary James Mattis and Director of Foreign and Defense Policy at AEI, Kori Schake. They showed that foreign policy had become unmoored. They called for a “strategy of security and solvency,” showing that economic policy is integral to foreign policy. In sum, we need good economic policy to bolster our diplomatic and defense policies. That means action by the Departments of State, Defense and, don’t forget, Treasury.

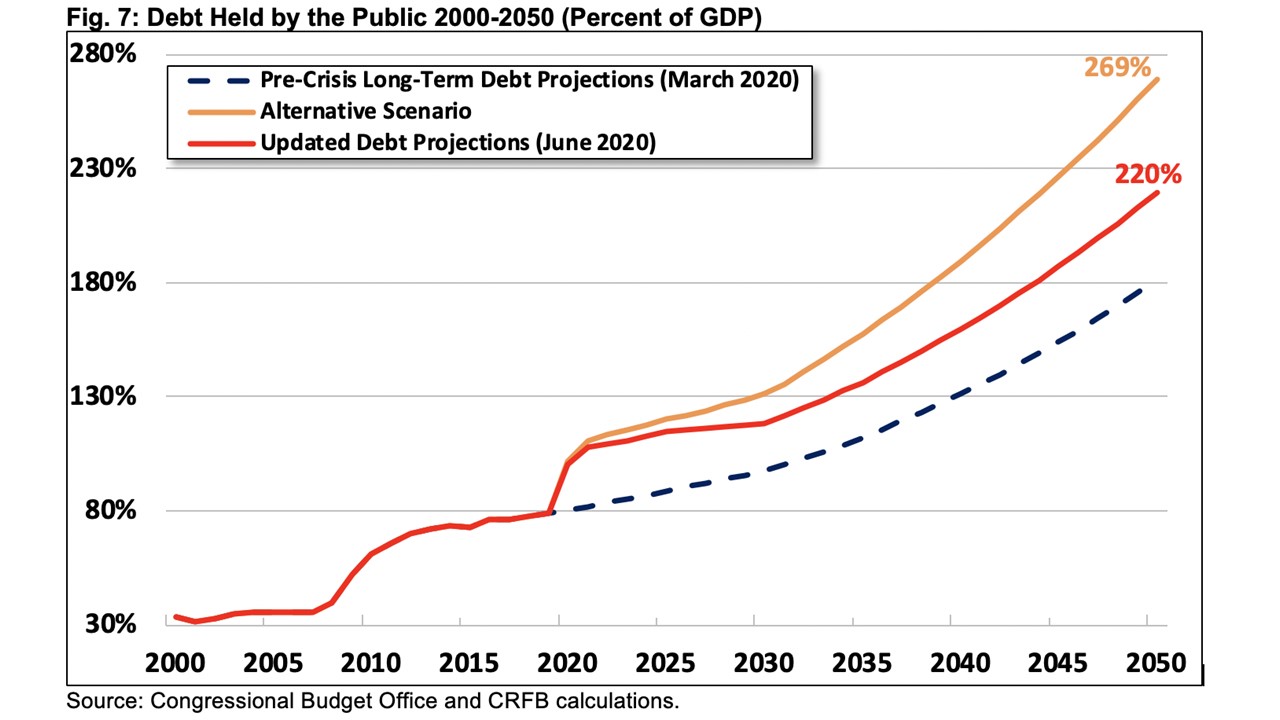

Accordingly, I started the lecture with fiscal policy, and I looked at the response to COVID-19, in which federal government outlays as a share of GDP rose from 20% to over 30% in 2020. Projections suggest that outlays will decline temporarily in the next few years, but then rise toward 30% in coming decades under current fiscal policy.

I argued that an alternative fiscal consolidation plan is needed, and this could be put in place whenever the next stimulus package is agreed to. A good plan holds federal expenditures as a share of GDP at about the 20 percent ratio that prevailed before the pandemic. That spending restraint avoids a potentially large increase in federal taxes and prevents the outstanding debt relative to GDP from rising from its current level. The spending restraint would come exclusively from permanent changes in entitlement programs, which are the principal source of the federal government’s long-term fiscal imbalance. Building on my work with John Cogan and Danny Heil, I argued that such a fiscal consolidation plan could become fully effective in fiscal year 2022, after the COVID pandemic has passed, and that the impact would be a substantial increase in real GDP in the short run and the long run. The reason that real GDP rises is that households expect higher after-tax incomes in the future.

Now when you think about fiscal policy you think about tax policy. The proposed consolidation plan does not call for an increase in tax rates, so the corporate rate would remain at 21% following the decline from 35% in the 2017 Act. Reduced tax rates on small businesses and individuals would remain. Indeed, there is evidence that these lower rates increased productivity growth, which drives up wages. Productivity growth nearly doubled, from 0.8% a year between 2013 and 2016 to 1.5% a year between 2016 and 2019.
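As a rough check on that arithmetic, here is a minimal Python sketch. The two growth rates come from the paragraph above; the 10-year horizon and the idea that each rate persists unchanged are purely illustrative assumptions of mine, not a forecast and not part of the lecture.

```python
# Average annual productivity growth rates quoted in the text (percent per year).
GROWTH_2013_16 = 0.8
GROWTH_2016_19 = 1.5

# Hypothetical horizon, chosen only for illustration (an assumption, not from the lecture).
HORIZON_YEARS = 10


def growth_ratio(before_pct: float, after_pct: float) -> float:
    """Ratio of the later growth rate to the earlier one."""
    return after_pct / before_pct


def cumulative_gain(rate_pct: float, years: int) -> float:
    """Cumulative percentage gain if rate_pct compounds for `years` years."""
    return ((1 + rate_pct / 100) ** years - 1) * 100


ratio = growth_ratio(GROWTH_2013_16, GROWTH_2016_19)
slow = cumulative_gain(GROWTH_2013_16, HORIZON_YEARS)
fast = cumulative_gain(GROWTH_2016_19, HORIZON_YEARS)

print(f"Later rate is {ratio:.3f} times the earlier rate")
print(f"Cumulative gain over {HORIZON_YEARS} years at {GROWTH_2013_16}%: {slow:.1f}%")
print(f"Cumulative gain over {HORIZON_YEARS} years at {GROWTH_2016_19}%: {fast:.1f}%")
```

The ratio of 1.875 is what "nearly doubled" refers to. The compounding lines simply show why a difference of less than a percentage point a year can matter for the level of productivity, and hence wages, if it were to persist.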

When you think about fiscal policy you also think about monetary policy. Here the Fed should return to a basic strategy. It dealt with the onslaught of the pandemic with understandable emergency actions. But the changes put in place in the past 2 months have been too vague. The Fed should return to the rules-based path of 2017-19.

Then there’s regulatory policy. I’ve been struck by how the private sector has already been responding to the new economic restrictions. I am giving a course to 350 students at Stanford. All online. All virtual. Students are all over the world. Thanks Zoom. Facebook will have half of its employees working remotely. Online retail is booming. We need regulatory policy at the federal and local level that allows more of this.

Then there is trade policy, also key to foreign policy. The problem is how to negotiate down tariffs and other restrictions. The aim should be lower trade barriers. There have also been concerns about supply chains. That is an old problem: People said we needed to produce our own textiles for military uniforms. Well, we can stockpile some things!

In sum, what I tried to show in the Peterson Lecture is why sound fiscal policy and its corollaries in other parts of economic policy (tax, monetary, regulatory, and trade policies) are all essential to our foreign policy. As Ellis, Mattis and Schake said, “no country has ever long retained its military power when its economic foundation faltered.” There are also other areas where we can improve. COVID-19 and the economic response have revealed large income disparities in the US. These have foreign policy implications too, and we need to deal with them. Again, the point is that economic policy and foreign policy go together.

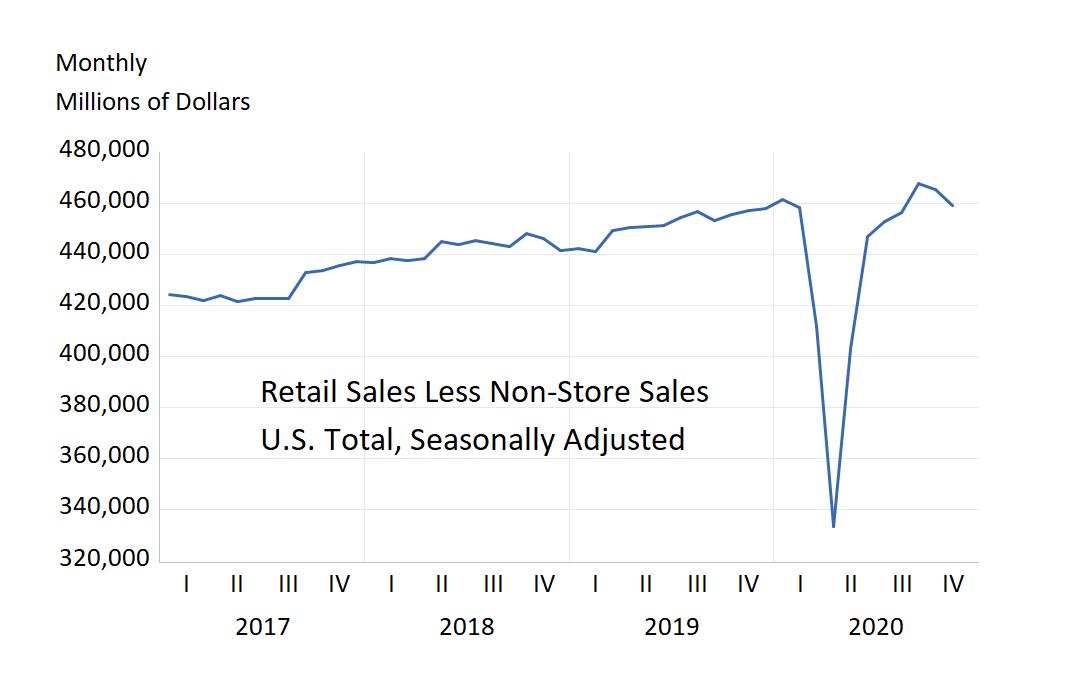

The pandemic immediately caused a sharp decline in retail sales less non-store sales in the United States. This was followed by a rebound in the second and third quarters of 2020. Note that the rebound, while very sharp, left total retail sales in stores no greater than they were before the pandemic.

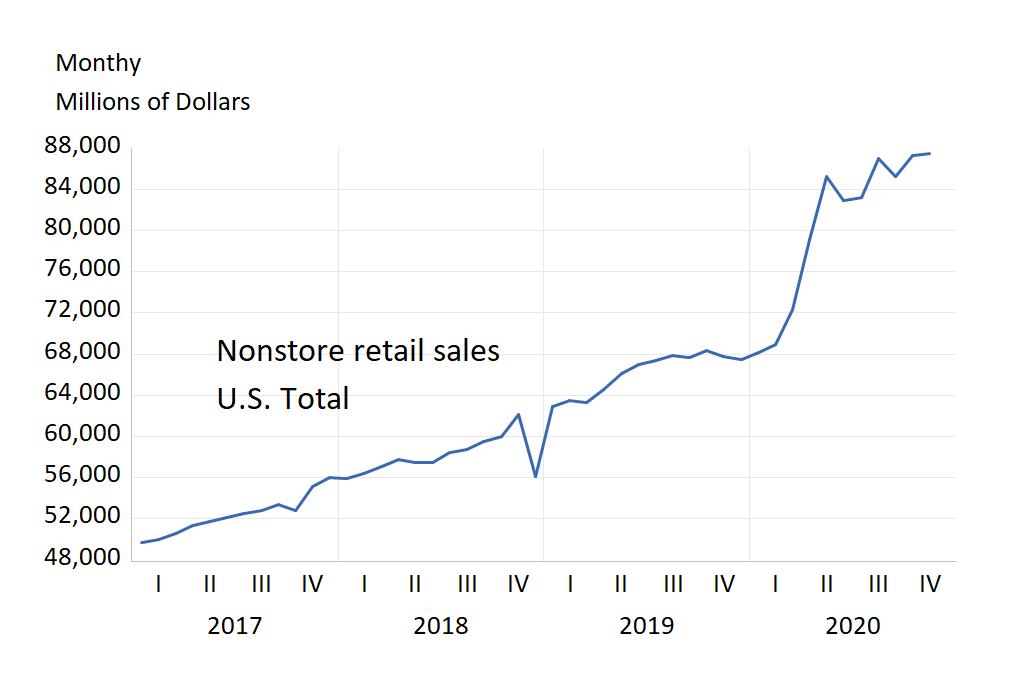

Non-store sales increased from the time the pandemic began, just as in-store sales were collapsing. Without non-store sales, total retail sales would have declined.

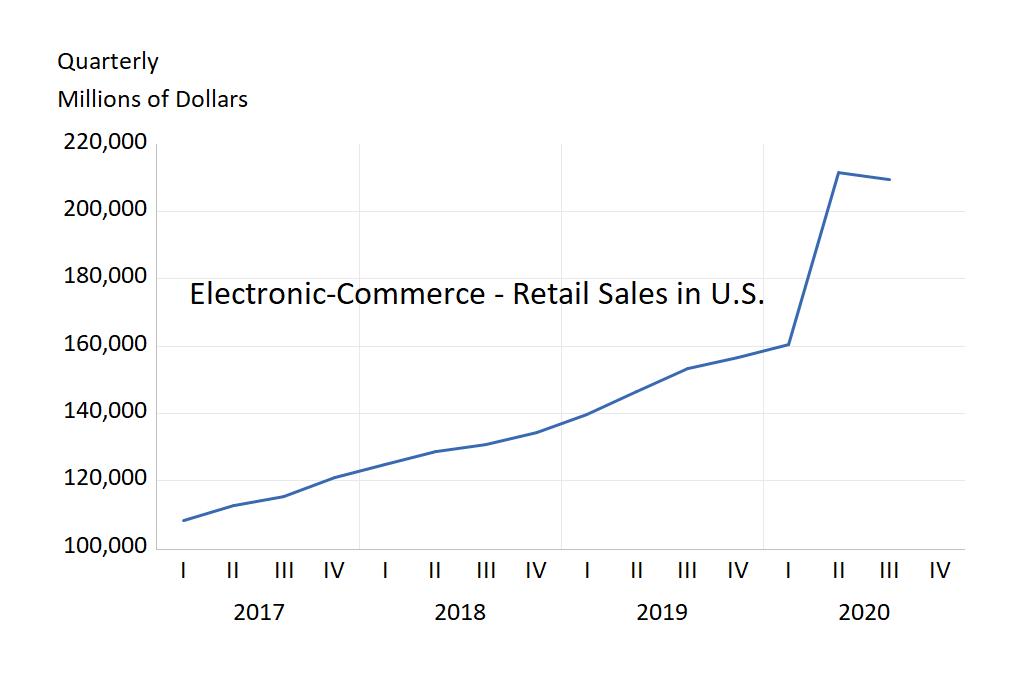

The same story as the in-store versus non-store story: a large increase starting at the time of the pandemic.
