“Did the crisis reveal that the previous consensus framework for monetary policy was inadequate and should be fundamentally reconsidered?” “Did economic relationships fundamentally change after the crisis and if so how?” These important questions set the theme for an excellent conference at the De Nederlandsche Bank (DNB) in Amsterdam this past week. In a talk at the conference I tried to answer the questions. Here’s a brief summary.

Eighty Years Ago

To understand the policy framework that existed before the financial crisis, it’s useful and fitting at this conference to trace the framework’s development back to its beginning exactly eighty years ago. It was in 1936 that Jan Tinbergen built “the first macroeconomic model ever.” It “was developed to answer the question of whether the government should leave the Gold standard and devaluate the Dutch guilder” as described on the DNB web site.

“Tinbergen built his model to give advice on policy,” as Geert Dhaene and Anton Barten explain in When It All Began. “Under certain assumptions about exogenous variables and alternative values for policy instrument he generated a set of time paths for the endogenous variables, one for each policy alternative. These were compared with the no change case and the best one was selected.” In other words, Tinbergen was analyzing policy in what could be called “path-space,” and his model showed that a path of devaluation would benefit the Dutch economy.

Tinbergen presented his paper, “An Economic Policy for 1936,” to the Dutch Economics and Statistics Association on October 24, 1936, but “the paper itself was already available in September,” according to Dhaene and Barten, who point out the amazing historical timing of events: “On 27 September the Netherlands abandoned the gold parity of the guilder, the last country of the gold block to do so. The guilder was effectively devalued by 17 – 20%.” As is often the case in policy evaluation, we do not know whether the paper influenced that policy decision, but the timing at least allows for that possibility.

In any case the idea of doing policy analysis with macro models in “path-space” greatly influenced the subsequent development of a policy framework. Simulating paths for instruments (whether the exchange rate, government spending or the money supply) and examining the resulting path of the target variables demonstrated the importance of correctly distinguishing between instruments and targets, of obtaining structural parameters rather than reduced-form parameters, and of developing econometric methods such as FIML, LIML and TSLS to estimate structural parameters. Indeed, this largely defined the research agenda of the Cowles Commission and Foundation at Chicago and Yale, of Lawrence Klein at Penn, and of many other macroeconomists around the world. Macroeconomic models like MPS and MCM were introduced to the Fed’s policy framework in the 1960s and 1970s.

Forty Years Ago

Starting about forty years ago, this basic framework for policy analysis with macroeconomic models changed dramatically. It moved from “path space” to “rule space.” Policy analysis in “rule space” examines the impact of different rules for the policy instruments rather than different set paths for the instruments. Here I would like to mention two of my teachers, Phil Howrey and Ted Anderson, who encouraged me to work in this direction for a number of reasons, and my 1976 Econometrica paper with Anderson, “Some Experimental Results on the Statistical Properties of Least Squares Estimates in Control Problems.” The influential papers by Robert Lucas (1976), “Econometric Policy Evaluation: A Critique,” and by Finn Kydland and Ed Prescott (1977), “Rules Rather Than Discretion: The Inconsistency of Optimal Plans,” provided two key reasons for a “rule space” approach, and my 1979 Econometrica paper “Estimation and Control of a Macroeconomic Model with Rational Expectations” used the approach to find good monetary policy rules in an estimated econometric model with rational expectations and sticky prices.
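To make the distinction concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions, not any of the models discussed in this post: the one-equation economy, the parameters `rho` and `b`, the feedback coefficient, and all function names are hypothetical. In “path space” the instrument is fixed in advance for each period; in “rule space” the instrument responds to the state of the economy.

```python
from typing import Callable, List, Sequence

# Hypothetical toy economy (an assumption for illustration only):
#   gap_t = rho * gap_{t-1} - b * i_t + shock_t
# where i_t is the policy instrument, measured as a deviation from neutral.

def simulate(policy: Callable[[int, float], float],
             shocks: Sequence[float],
             rho: float = 0.8,
             b: float = 0.5) -> List[float]:
    """Return the path of the output gap under a given policy."""
    gaps: List[float] = []
    prev_gap = 0.0
    for t, shock in enumerate(shocks):
        i_t = policy(t, prev_gap)          # the instrument chosen for period t
        gap = rho * prev_gap - b * i_t + shock
        gaps.append(gap)
        prev_gap = gap
    return gaps

def fixed_path(path: Sequence[float]) -> Callable[[int, float], float]:
    """Path space: the instrument follows a preset path and ignores the state."""
    return lambda t, prev_gap: path[t]

def feedback_rule(c: float) -> Callable[[int, float], float]:
    """Rule space: the instrument reacts to last period's gap."""
    return lambda t, prev_gap: c * prev_gap

def loss(gaps: Sequence[float]) -> float:
    """Sum of squared gaps, a simple stand-in for a performance measure."""
    return sum(g * g for g in gaps)

shocks = [1.0, 0.0, 0.0, 0.0]              # one unexpected shock in the first period
path_gaps = simulate(fixed_path([0.0, 0.0, 0.0, 0.0]), shocks)
rule_gaps = simulate(feedback_rule(1.0), shocks)

print("loss under fixed path:", loss(path_gaps))
print("loss under feedback rule:", loss(rule_gaps))
```

In this toy setting the preset path cannot respond to the shock, while the rule does so automatically. Comparing alternative values of the feedback coefficient, rather than alternative preset paths, is what analysis in “rule space” amounts to here.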

Over time more complex empirical models with rational expectations and sticky prices (new Keynesian) provided better underpinnings for this monetary policy framework. Many of the new models were international with highly integrated capital markets and no-arbitrage conditions on the term structure. Soon the new Keynesian FRB/US, FRB/Global and SIGMA models replaced the MPS and MCM models at the Fed. The objective was to find monetary policy rules which improved macroeconomic performance. The Taylor Rule is an example, but, more generally, the monetary policy framework found that rules-based monetary policy led to better economic performance in the national economy as well as in the global economy where an international Nash equilibrium in “rule space” was nearly optimal.
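For readers who want to see what such a rule looks like, the Taylor Rule can be sketched in a few lines of Python. The coefficients below (weights of 0.5 on the inflation gap and the output gap, a 2 percent equilibrium real rate and a 2 percent inflation target) are those of the original 1993 formulation. The function and parameter names are illustrative assumptions, not taken from any of the models mentioned above.

```python
def taylor_rule(inflation: float,
                output_gap: float,
                r_star: float = 2.0,     # equilibrium real interest rate, percent
                pi_star: float = 2.0,    # inflation target, percent
                w_pi: float = 0.5,       # weight on the inflation gap
                w_y: float = 0.5) -> float:  # weight on the output gap
    """Policy interest rate (percent) prescribed by a Taylor-type rule."""
    return r_star + inflation + w_pi * (inflation - pi_star) + w_y * output_gap

# Inflation on target and no output gap: the rule prescribes r_star + pi_star.
print(taylor_rule(inflation=2.0, output_gap=0.0))

# Inflation one point above target: the rate rises by more than one point.
print(taylor_rule(inflation=3.0, output_gap=0.0))

# Output two percent below potential: the rate is lowered.
print(taylor_rule(inflation=2.0, output_gap=-2.0))
```

Because the policy rate rises by more than one-for-one with inflation, the real interest rate increases when inflation rises, which is the stabilizing property of the rule.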

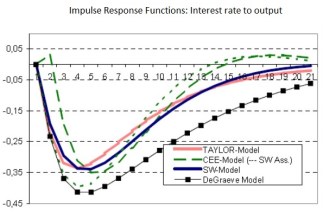

This was the monetary policy framework that was in place at the time of the financial crisis. Many of the models that underpinned this framework can be found in the invaluable archives of the Macro Model Data Base (MMB) of Volker Wieland where there are many models dated 2008 and earlier including the models of Smets and Wouters, of Christiano, Eichenbaum, and Evans, of De Graeve, and of Taylor. Many models included financial sectors with a variety of interest rates; the De Graeve model included a financial accelerator. The impact of monetary shocks was quite similar in the different models, as shown here and summarized in the accompanying chart of four models, and simple policy rules were robust to different models.

Perhaps most important, the framework worked in practice. There is overwhelming evidence that when central banks moved toward more transparent rules-based policies in the 1980s and 1990s, including through a focus on price stability, there was a dramatic improvement compared with the 1970s when policy was less rule-like and more unpredictable. Moreover, there is considerable evidence that monetary policy deviated from the framework in recent years by moving away from rule-like policies, especially during the “too low for too long” period of 2003-2005 leading up to the financial crisis, and that this deviation has continued. In other words, deviating from the framework has not worked.

Have the economic relationships and therefore the framework fundamentally changed since the crisis? Of course, as Tom Sargent puts it in his editorial review of the forthcoming Handbook of Macroeconomics edited by Harald Uhlig and me, “both before and after that crisis, working macroeconomists had rolled up their sleeves to study how financial frictions, incentive problems, incomplete markets, interactions among monetary, fiscal, regulatory, and bailout policies, and a host of other issues affect prices and quantities and good economic policies.” But, taking account of this research, the overall basic macro framework has shown a great degree of constancy as suggested by studies in the new Handbook of Macroeconomics. For example, Jesper Linde, Frank Smets, and Raf Wouters examine some of the major changes, such as the financial accelerator or a better modeling of the zero lower bound. They find that these changes do not alter the behavior of the models during the financial crisis by much. They also note that, despite efforts to do so, the framework has changed little to incorporate the impact of unconventional policy instruments such as quantitative easing and negative interest rates. In another paper in the new Handbook, Volker Wieland, Elena Afanasyeva, Meguy Kuete, and Jinhyuk Yoo examine how new models of financial frictions or credit constraints affect policy rules. They find only small changes, including a benefit from including credit growth in the rules.

All this suggests that the crisis did not reveal that the previous consensus framework for monetary policy should be fundamentally reconsidered, or even that it has fundamentally changed. This previous framework was working. The mistake was deviating from it. Of course, macroeconomists should keep working and reconsidering, but it’s the deviation from the framework—not the framework itself—that needs to be fundamentally reconsidered at this time. I have argued that there is a need to return to the policy recommendations of such a framework domestically and internationally.

We are not there yet, of course, but it is a good sign that central bankers have inflation goals and are discussing policy rules. Janet Yellen’s policy framework for the future, put forth at Jackson Hole in August, centers around a Taylor rule. Many are reaching the conclusion that unconventional monetary policy may not be very effective. Paul Volcker and Raghu Rajan are making the case for a rules-based international system, and Mario Draghi argued at Sintra in June that “we would all clearly benefit from enhanced understanding among central banks on the relative paths of monetary policy. That comes down, above all, to improving communication over our reaction functions and policy frameworks.”